These are the notes that accompany my presentation, Docker, the Future of DevOps. It turned out, quite fittingly, to be a whale-sized article :).

What Is Docker And Why Should You Care?

Contrary to many others, I believe that calling Docker a lightweight virtual machine is a very good description. Another way to look at Docker is as chroot on steroids. That last explanation probably doesn't help much unless you know what chroot is.

Chroot is an operation that changes the apparent root directory for the current running process and their children. A program that is run in such a modified environment cannot access files and commands outside that environmental directory tree. This modified environment is called a chroot jail. -- From Archwiki, chroot

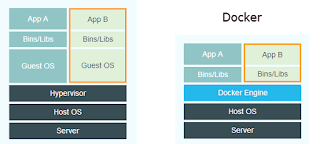

VM vs. Docker

The image describes the difference between a VM and Docker. Instead of a hypervisor with Guest OSes on top, Docker uses a Docker engine and containers on top. Does this really tell us anything? What is the difference between a "hypervisor" and the "Docker engine"? A nice way of illustrating this difference is through listing the running processes on the Host.

The following simplified process trees illustrate the difference.

On the Host running the VM, only one process is visible on the Host, even though many processes are running inside the VM.

    # Running processes on Host for a VM
    $ pstree VM
    -+= /VirtualBox.app
     |--= coreos-vagrant

On the Host running the Docker Engine, all the running processes are visible.

The contained processes are running on the Host! They can be inspected and manipulated with normal commands like ps and kill.

    # Running processes on Host for a Docker Engine
    $ pstree docker
    -+= /docker
     |--= /bin/sh
     |--= node server.js
     |--= go run app
     |--= ruby server.rb
     ...
     |--= /bin/bash

Now that everything is crystal clear, what does this mean? It means that Docker containers are smaller, faster, and more easily integrated with each other than VMs, as the following comparison illustrates.

The size of a small virtual machine image with CoreOS is about 1.2 GB. The size of a small container with busybox is 2.5 MB.

The startup time of a fast virtual machine is measured in minutes. The startup time of a container is often less than a second.

Integrating virtual machines running on the same host must be done by setting up the networking properly. Integrating containers is supported by Docker out of the box.

So, containers are lightweight, fast and easily integrated, but that is not all.

Docker is a Contract

Docker is also the contract between Developers and Operations. Developers and Operations often have very different attitudes when it comes to choosing tools and environments.

Developers want to use the next shiny thing, we want to use Node.js, Rust, Go, Microservices, Cassandra, Hadoop, blablabla, blablabla, ...

Operations want to use the same as they used yesterday, what they used last year, because it is proven, it works!

(Yes, I know this is stereotypical, but there is some truth in it :)

But, this is where Docker shines. Operations are satisfied because they only have to care about one thing. They have to support deploying containers. Developers are also happy. They can develop with whatever the fad of the day is and then just stick it into a container and throw it over the wall to Operations. Yippie ki-yay!

But, it does not end here. Since Operations are, usually, better than Developers when it comes to optimizing for production, they can help developers build optimized containers that can be used for local development. Not a bad situation at all.

Better Utilization

A few years ago, before virtualization, when we needed to create a new service, we had to acquire an actual machine, hardware. It could take months, depending on the processes of the company you were working for. Once the server was in place we created the service, and most of the time it did not become the success we were hoping for. The machine was ticking along with a CPU utilization of 5%. Expensive!

Then, virtualization entered the arena and it was possible to spin up a new machine in minutes. It was also possible to run multiple virtual machines on the same hardware, so the utilization increased from 5%. But, we still need a virtual machine per service, so we cannot utilize the machine as much as we would want.

Containerization is the next step in this process. Containers can be spun up in seconds and they can be deployed at a much more granular level than virtual machines.

Dependencies

It is indeed nice that Docker can help us speed up our slow virtual machines, but why can't we just deploy all our services on the same machine? You already know the answer: dependency hell. Installing multiple independent services on a single machine, real or virtual, is a recipe for disaster. Docker Inc. calls this the matrix of hell.

Docker eliminates the matrix of hell by keeping the dependencies contained inside the containers.

Speed

Speed is of course always nice, but being 100 times faster is not only nice, it changes what is possible. An increase of that size enables whole new possibilities. It is now possible to create throw-away environments. Need to change your entire development environment from Golang to Clojure? Fire up a container. Need to provide a production database for integration and performance testing? Fire up a container. Need to switch the entire production server from Apache to Nginx? Fire up a container!

How Does Docker Work?

Docker is implemented as a client-server system. The Docker daemon runs on the Host and is accessed via a socket connection from the client. The client may, but does not have to, be on the same machine as the daemon. The Docker CLI client works the same way as any other client, but it is usually connected through a Unix domain socket instead of a TCP socket.

The daemon receives commands from the client and manages the containers on the Host where it is running.
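Which daemon the CLI talks to can be worked out with a few lines of shell. The sketch below assumes the usual client behaviour of honouring the DOCKER_HOST environment variable and otherwise falling back to the local Unix socket at /var/run/docker.sock. The function name docker_endpoint and the remote address are made up for illustration.

```shell
# docker_endpoint: print the address the Docker client would use.
# Assumption: DOCKER_HOST overrides the default local Unix domain socket.
docker_endpoint() {
    echo "${DOCKER_HOST:-unix:///var/run/docker.sock}"
}

# Default case: the daemon on the same machine, reached through the Unix socket.
( unset DOCKER_HOST; docker_endpoint )

# Remote case: a daemon on another machine, reached over TCP.
# 192.0.2.10 is a documentation-only address, not a real host.
( DOCKER_HOST=tcp://192.0.2.10:2375; docker_endpoint )
```

Running the two cases in subshells keeps the example from changing the DOCKER_HOST setting of the shell you paste it into.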

Docker Concepts and Interactions

- Host, the machine that is running the containers.

- Image, a hierarchy of files, with meta-data for how to run a container.

- Container, a contained running process, started from an image.

- Registry, a repository of images.

- Volume, storage outside the container.

- Dockerfile, a script for creating images.

We can build an image from a Dockerfile. We can also create an image by committing a running container. The image can be tagged, and it can be pushed to and pulled from a registry. A container is made from an image with run, which also starts it, or with create, which does not. A container can be stopped and started. It can be removed with rm.

Images

An image is a file structure, with meta-data for how to run a container. The image is built on a union filesystem, a filesystem built out of layers. Every command in the Dockerfile creates a new layer in the filesystem.

When a container is started, all layers are merged together into what appears to the process as a unified file system. When files are removed in the union file system they are only marked as deleted. The files will still exist in the layer where they were last present.
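The "marked as deleted" behaviour can be imitated with plain directories. This is a toy model, not how Docker implements it: each layer is a directory, and an empty file named .wh.NAME marks NAME as deleted, a convention borrowed from AUFS-style whiteouts. The layer names, file names, and the union_cat helper are all hypothetical.

```shell
# Toy model of a layered file system: three layers, lowest number at the bottom.
layers_root=$(mktemp -d)
mkdir -p "$layers_root/1-base" "$layers_root/2-app" "$layers_root/3-cleanup"
echo "from base" > "$layers_root/1-base/motd"
echo "secret"    > "$layers_root/1-base/build.key"
echo "from app"  > "$layers_root/2-app/motd"        # shadows the base copy
: > "$layers_root/3-cleanup/.wh.build.key"          # marks build.key as deleted

# union_cat NAME: print NAME as seen through the merged view (top layer wins).
union_cat() {
    for layer in $(ls -r "$layers_root"); do
        if [ -e "$layers_root/$layer/.wh.$1" ]; then
            return 1                                # deleted by a higher layer
        fi
        if [ -e "$layers_root/$layer/$1" ]; then
            cat "$layers_root/$layer/$1"
            return 0
        fi
    done
    return 1
}

union_cat motd                                      # copy from the topmost layer that has it
union_cat build.key || echo "build.key: not visible in the merged view"
ls "$layers_root/1-base"                            # build.key is still stored in the base layer
```

The last command is the point of the exercise: removing a file in a later layer hides it, but the bytes still ship with the image.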

    # Commands for interacting with images
    $ docker images  # shows all images.
    $ docker import  # creates an image from a tarball.
    $ docker build   # creates image from Dockerfile.
    $ docker commit  # creates image from a container.
    $ docker rmi     # removes an image.
    $ docker history # list changes of an image.

Image Sizes

Here is some data on commonly used images:

- scratch - this is the ultimate base image and it has 0 files and 0 size.

- busybox - a minimal Unix weighing in at 2.5 MB and around 10000 files.

- debian:jessie - the latest Debian is 122 MB and around 18000 files.

- ubuntu:14.04 - Ubuntu is 188 MB and has around 23000 files.

Creating images

Images can be created with docker commit container-id, docker import url-to-tar, or docker build -f Dockerfile .

    # Creating an image with commit
    $ docker run -i -t debian:jessie bash
    root@e6c7d21960:/# apt-get update
    root@e6c7d21960:/# apt-get install postgresql
    root@e6c7d21960:/# apt-get install node
    root@e6c7d21960:/# node --version
    root@e6c7d21960:/# curl https://iojs.org/dist/v1.2.0/iojs-v1.2.0-linux-x64.tar.gz -o iojs.tgz
    root@e6c7d21960:/# tar xzf iojs.tgz
    root@e6c7d21960:/# ls
    root@e6c7d21960:/# cd iojs-v1.2.0-linux-x64/
    root@e6c7d21960:/# ls
    root@e6c7d21960:/# cp -r * /usr/local/
    root@e6c7d21960:/# iojs --version
    1.2.0
    root@e6c7d21960:/# exit
    $ docker ps -l -q
    e6c7d21960
    $ docker commit e6c7d21960 postgres-iojs
    daeb0b76283eac2e0c7f7504bdde2d49c721a1b03a50f750ea9982464cfccb1e

As you can see from the above session, it is possible to create images with docker commit, but it is kind of messy and it is hard to reproduce. It is better to create images with Dockerfiles since they are clear and are easily reproduced.

    FROM debian:jessie
    # Dockerfile for postgres-iojs
    RUN apt-get update
    RUN apt-get install -y postgresql curl
    RUN curl https://iojs.org/dist/v1.2.0/iojs-v1.2.0-linux-x64.tar.gz -o iojs.tgz
    RUN tar xzf iojs.tgz
    RUN cp -r iojs-v1.2.0-linux-x64/* /usr/local

Build it with

    $ docker build --tag postgres-iojs .

Since every command in the Dockerfile creates a new layer, it is often better to run similar commands together. Group the commands with && and split them over several lines for readability.

    FROM debian:jessie
    # Dockerfile for postgres-iojs
    RUN apt-get update && \
        apt-get install -y postgresql curl && \
        curl https://iojs.org/dist/v1.2.0/iojs-v1.2.0-linux-x64.tar.gz -o iojs.tgz && \
        tar xzf iojs.tgz && \
        cp -r iojs-v1.2.0-linux-x64/* /usr/local
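Chaining with && has a second benefit besides fewer layers: a failing step stops the rest of the chain, so a broken download is not silently followed by tar and cp. This is ordinary shell behaviour and can be checked without Docker. In the sketch below, step is a hypothetical stand-in for a real command.

```shell
# step NAME STATUS: stand-in for a real command; logs its name and
# returns the given exit status.
step() {
    echo "running $1"
    return "$2"
}

# All three steps succeed, so all three run.
step update 0 && step install 0 && step unpack 0

# The second step fails, so the third never runs and the chain's
# exit status is non-zero.
step update 0 && step install 1 && step unpack 0
echo "chain exit status: $?"
```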

The ordering of the lines in the Dockerfile is important as Docker caches the intermediate images in order to speed up image building. Order your Dockerfile by putting the lines that change more often at the bottom of the file. ADD and COPY get special treatment from the cache and are re-run whenever an affected file changes, even though the line does not change.
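A minimal sketch of that ordering, written as a shell snippet that generates the Dockerfile in a scratch directory. The package list and the /srv/app path are placeholders, not taken from a real project.

```shell
# Write an example Dockerfile into a scratch directory.
build_dir=$(mktemp -d)
cat > "$build_dir/Dockerfile" <<'EOF'
FROM debian:jessie
# Changes rarely: stays cached across most builds.
RUN apt-get update && \
    apt-get install -y curl
# Changes on almost every build: everything from here on is rebuilt
# whenever a copied file changes, so keep it last.
COPY . /srv/app
EOF

# Show the instructions in the order Docker will execute them.
grep -v '^#' "$build_dir/Dockerfile"
```

With the COPY line last, editing a source file invalidates only the final layer, and the slow apt-get layer above it is reused from the cache.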

Dockerfile Commands

The Dockerfile supports 13 commands. Some of the commands are used when you build the image and some are used when you run a container from the image. Here is an overview of the commands and when they are used.

BUILD Commands

- FROM - The image the new image will be based on.

- MAINTAINER - Name and email of the maintainer of this image.

- COPY - Copy a file or a directory into the image.

- ADD - Same as COPY, but handles URLs and unpacks tarballs automatically.

- RUN - Run a command inside the container, such as apt-get install.

- ONBUILD - Run commands when building an inherited Dockerfile.

- .dockerignore - Not a command, but it controls what files are added to the build context. Should include .git and other files not needed when building the image.

RUN Commands

- CMD - Default command to run when running the container. Can be overridden with command line parameters.

- ENV - Set environment variable in the container.

- EXPOSE - Expose ports from the container. Must be explicitly exposed by the run command to the Host with -p or -P.

- VOLUME - Specify that a directory should be stored outside the union file system. If it is not set with docker run -v, it will be created in /var/lib/docker/volumes.

- ENTRYPOINT - Specify a command that is not overridden by giving a new command with docker run image cmd. It is mostly used to give a default executable and use commands as parameters to it.

Both BUILD and RUN Commands

- USER - Set the user for RUN, CMD and ENTRYPOINT.

- WORKDIR - Sets the working directory for RUN, CMD, ENTRYPOINT, ADD and COPY.

Running Containers

When a container is started, the process gets a new writable layer in the union file system where it can execute.

Since version 1.5, it is also possible to make this top layer read-only, forcing us to use volumes for all file output such as logging, and temp-files.

    # Commands for interacting with containers
    $ docker create  # creates a container but does not start it.
    $ docker run     # creates and starts a container.
    $ docker stop    # stops it.
    $ docker start   # will start it again.
    $ docker restart # restarts a container.
    $ docker rm      # deletes a container.
    $ docker kill    # sends a SIGKILL to a container.
    $ docker attach  # will connect to a running container.
    $ docker wait    # blocks until container stops.
    $ docker exec    # executes a command in a running container.

docker run

As the list above describes, docker run is the command used to start new containers. Here are some common ways to run containers.

    # Run a container interactively
    $ docker run -it --rm ubuntu

This is the way to run a container if you want to interact with it as a normal terminal program. If you want to pipe into the container, you should not use the -t option.

- --interactive (-i) - send stdin to the process.

- --tty (-t) - tell the process that a terminal is present. This affects how the process outputs data and how it treats signals such as (Ctrl-C).

- --rm - remove the container on exit.

- ubuntu - use the ubuntu:latest image.

    # Run a container in the background
    $ docker run -d hadoop

- --detach (-d) - Run in detached mode. You can attach again with docker attach.

docker run --env

    # Run a named container and pass it some environment variables
    $ docker run \
        --name mydb \
        --env MYSQL_USER=db-user \
        -e MYSQL_PASSWORD=secret \
        --env-file ./mysql.env \
        mysql

- --name - name the container, otherwise it gets a random name.

- --env (-e) - set an environment variable in the container.

- --env-file - set all environment variables listed in env-file.

- mysql - use the mysql:latest image.
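The env file itself is just a list of VARIABLE=value lines, with lines starting with # treated as comments. The sketch below writes one to a scratch directory and lists the variable names it defines. The values are placeholders, and the scratch path is an assumption made so the example does not overwrite a real mysql.env.

```shell
# Create an env file: one VARIABLE=value per line.
env_dir=$(mktemp -d)
cat > "$env_dir/mysql.env" <<'EOF'
# Credentials for the example database (placeholder values)
MYSQL_USER=db-user
MYSQL_PASSWORD=secret
EOF

# List the variable names the container would receive, without printing the values.
grep -v '^#' "$env_dir/mysql.env" | cut -d= -f1

# The file would then be passed to the container like this:
#   docker run --name mydb --env-file "$env_dir/mysql.env" mysql
```

Keeping credentials in a file rather than on the command line also keeps them out of your shell history.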

docker run --publish

    # Publish container port 80 on a random port on the Host
    $ docker run -p 80 nginx

    # Publish container port 80 on port 8080 on the Host
    $ docker run -p 8080:80 nginx

    # Publish container port 80 on port 8080 on the localhost interface on the Host
    $ docker run -p 127.0.0.1:8080:80 nginx

    # Publish all EXPOSEd ports from the container on random ports on the Host
    $ docker run -P nginx

The nginx image, for example, exposes port 80 and 443.

    FROM debian:wheezy

    MAINTAINER NGINX "docker-maint@nginx.com"
    ...
    EXPOSE 80 443

docker run --link

    # Start a postgres container, named mydb
    $ docker run --name mydb postgres

    # Link mydb as db into myapp
    $ docker run --link mydb:db myapp

Linking a container sets up networking from the linking container into the linked container. It does two things:

- It updates /etc/hosts with the link name given to the container, db in the example above, making it possible to access the container by the name db. This is very good.

- It creates environment variables for the EXPOSEd ports. This is practically useless since I can access the same port by using a hostname:port combination anyway.

The linked networking is not constrained by the ports EXPOSEd by the image. All ports are available to the linking container.
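From inside the linking container, a start-up script can rely on the db alias and treat the generated variables as optional. This is a sketch only: the variable name follows the ALIAS_PORT_port_PROTO_PORT pattern that links generate, it assumes the postgres image EXPOSEs port 5432, and the db_url function and URL scheme are illustrative.

```shell
# db_url: build a connection URL for the linked database.
# The host is the link alias from /etc/hosts; the port comes from the
# link-generated variable when present, else the PostgreSQL default.
db_url() {
    echo "postgres://db:${DB_PORT_5432_TCP_PORT:-5432}/"
}

db_url
```

Because the hostname alone is enough, the script keeps working in environments where the variables are not set at all.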

docker run limits

It is also possible to limit how much access the container has to the Host's resources.

    # Limit the amount of memory
    $ docker run -m 256m yourapp

    # Limit the number of shares of the CPU this process uses (out of 1024)
    $ docker run --cpu-shares 512 myapp

    # Change the user for the process to www instead of root (good for security)
    $ docker run -u=www nginx

Setting CPU shares to 512 out of 1024 does not mean that the process gets access to half of the CPU; it means that it gets half as many shares as a container that is run without any limit. If we have two containers running with 1024 shares and one with 512 shares, the 512-container will get about one fifth of the CPU (512 of 2560 shares).
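The arithmetic behind that fraction, as a small shell sketch. It assumes all containers are competing for the CPU at the same time, since shares only matter under contention. The cpu_percent helper is hypothetical.

```shell
# cpu_percent SHARES TOTAL: integer percentage of CPU time a container
# gets when every container is busy.
cpu_percent() {
    echo $(( $1 * 100 / $2 ))
}

# Two containers with the default 1024 shares and one with 512.
total=$(( 1024 + 1024 + 512 ))

cpu_percent 512 "$total"     # the 512-share container: 20
cpu_percent 1024 "$total"    # each 1024-share container: 40
```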

docker exec container

docker exec allows us to run commands inside already running containers. This is very good for debugging among other things.

    # Run a shell inside the container with id 6f2c42c0
    $ docker exec -it 6f2c42c0 sh

Volumes

Volumes provide persistent storage outside the container. That means the data will not be saved if you commit the new image.

    # Start a new nginx container with /var/log as a volume
    $ docker run -v /var/log nginx

Since the directory of the host is not given, the volume is created in /var/lib/docker/volumes/ec3c543bc..535.

The exact name of the directory can be found by running docker inspect container-id.

    # Start a new nginx container with /var/log as a volume mapped to /tmp on Host
    $ docker run -v /tmp:/var/log nginx

It is also possible to mount volumes from another container with --volumes-from.

    # Start a db container
    $ docker run -v /var/lib/postgresql/data --name mydb postgres

    # Start a backup container with the volumes taken from the mydb container
    $ docker run --volumes-from mydb backup

Docker Registries

Docker Hub is the official repository for images. It supports public (free) and private (fee) repositories. Repositories can be tagged as official and this means that they are curated by the maintainers of the project (or someone connected with it).

Docker Hub also supports automatic builds of projects hosted on GitHub and Bitbucket. If automatic build is enabled, an image will automatically be built every time you push to your source code repository.

If you don't want to use automatic builds, you can also docker push directly to Docker Hub. docker pull will pull images. docker run with an image that does not exist locally will automatically initiate a docker pull.

It is also possible to host your images elsewhere. Docker maintains code for docker-registry on GitHub. But, I have found it to be slow and buggy.

Quay, Tutum, and Google also provide hosting of private Docker images.

Inspecting Containers

A lot of commands are available for inspecting containers:

    $ docker ps      # shows running containers.
    $ docker inspect # info on a container (incl. IP address).
    $ docker logs    # gets logs from container.
    $ docker events  # gets events from container.
    $ docker port    # shows public facing port of container.
    $ docker top     # shows running processes in container.
    $ docker diff    # shows changed files in container's FS.
    $ docker stats   # shows metrics, memory, cpu, filesystem

I will only elaborate on docker ps and docker inspect since they are the most important ones.

    # List all containers, (--all means including stopped)
    $ docker ps --all
    CONTAINER ID   IMAGE            COMMAND     NAMES
    9923ad197b65   busybox:latest   "sh"        romantic_fermat
    fe7f682cf546   debian:jessie    "bash"      silly_bartik
    09c707e2ec07   scratch:latest   "ls"        suspicious_perlman
    b15c5c553202   mongo:2.6.7      "/entrypo   some-mongo
    fbe1f24d7df8   busybox:latest   "true"      db_data

    # Inspect the container named silly_bartik
    # Output is shortened for brevity.
    $ docker inspect silly_bartik
    [{
        "Args": [
            "-c",
            "/usr/local/bin/confd-watch.sh"
        ],
        "Config": {
            ...
            "Hostname": "3c012df7bab9",
            "Image": "andersjanmyr/nginx-confd:development",
        },
        "Id": "3c012df7bab977a194199f1",
        "Image": "d3bd1f07cae1bd624e2e",
        "NetworkSettings": {
            "IPAddress": "",
            ...
            "Ports": null
        },
        "Volumes": {},
        ...
    }]

Tips and Tricks

Getting the id of a container is useful for scripting.

    # Get the id (-q) of the last (-l) run container
    $ docker ps -l -q
    c8044ab1a3d0

docker inspect can take a format string, a Go template, and it allows you to be more specific about what data you are interested in. Again, useful for scripting.

    $ docker inspect -f '{{ .NetworkSettings.IPAddress }}' 6f2c42c05500
    172.17.0.11

Use docker exec to interact with a running container.

    # Get the environment variables of a running container.
    $ docker exec -it 6f2c42c05500 env
    PATH=/usr/local/sbin:/usr...
    HOSTNAME=6f2c42c05500
    REDIS_1_PORT=tcp://172.17.0.9:6379
    REDIS_1_PORT_6379_TCP=tcp://172.17.0.9:6379
    ...

Use volumes to avoid having to rebuild an image every time you run it. Every time the below Dockerfile is built it copies the current directory into the container.

    FROM dockerfile/nodejs:latest

    MAINTAINER Anders Janmyr "anders@janmyr.com"
    RUN apt-get update && \
        apt-get install -y zlib1g-dev && \
        npm install -g pm2 && \
        mkdir -p /srv/app

    WORKDIR /srv/app
    COPY . /srv/app

    CMD pm2 start app.js -x -i 1 && pm2 logs

    # Build and run the image
    $ docker build -t myapp .
    $ docker run -it --rm myapp

To avoid the rebuild, build the image once and then mount the local directory when you run it.

    $ docker run -it --rm -v "$(pwd)":/srv/app myapp

Security

You may have heard that it is not secure to use Docker. This is not untrue, but it does not have to be a problem.

The following security problems currently exist with Docker.

- Image signatures are not properly verified.

- If you have root in a container you can, potentially, get root on the entire box.

Security Remedies

- Use trusted images from your private repositories.

- Don't run containers as root, if possible.

- Treat root in a container as root outside a container.

If you own all the containers running on the server, you don't have to worry about them interacting with each other maliciously.

Container "Options"

I put "options" in quotes since there are not really any options at the moment, but a lot of players want to get in the game. Ubuntu is working on something called LXD and Microsoft on something called Drawbridge. But, the one that seems most interesting is the one called Rocket.

Rocket is developed by CoreOS, which is a big container (Docker) platform. The reason for developing it is that they feel that Docker Inc. is bloating Docker and also that it is moving into the same area as CoreOS, which is container hosting in the cloud.

With this new container specification they are trying to remove some of the warts which Docker has for historical reasons and to provide a simple container with support for socket activation and security built in from the start.

Orchestration

When we split up our application into multiple different containers we get some new problems. How do we make the different parts talk to each other? On a single host? On multiple hosts?

Docker solves the problem of orchestration on a single host with links.

To simplify the linking of containers, Docker provides a tool called docker-compose. It was previously called fig and was developed by another company, which was recently acquired by Docker.

docker-compose

docker-compose declares the information for multiple containers in a single file, docker-compose.yml. Here is an example of a file that manages two containers, web and redis.

    web:
      build: .
      command: python app.py
      ports:
        - "5000:5000"
      volumes:
        - .:/code
      links:
        - redis
    redis:
      image: redis

To start the above containers, you can run the command docker-compose up.

    $ docker-compose up
    Pulling image orchardup/redis...
    Building web...
    Starting figtest_redis_1...
    Starting figtest_web_1...
    redis_1 | [8] 02 Jan 18:43:35.576 # Server started, Redis version 2.8.3
    web_1   |  * Running on http://0.0.0.0:5000/

It is also possible to start the containers in detached mode with docker-compose up -d, and I can find out what containers are running with docker-compose ps.

    $ docker-compose up -d
    Starting figtest_redis_1...
    Starting figtest_web_1...
    $ docker-compose ps
    Name              Command                    State   Ports
    ------------------------------------------------------------
    figtest_redis_1   /usr/local/bin/run         Up
    figtest_web_1     /bin/sh -c python app.py   Up      5000->5000

It is possible to run commands that work with a single container or commands that work with all containers at once.

    # Get env variables for web container
    $ docker-compose run web env

    # Scale to multiple containers
    $ docker-compose scale web=3 redis=2

    # Get logs for all containers
    $ docker-compose logs

As you can see from the above commands, scaling is supported. The application must be written in a way that can handle multiple containers. Load-balancing is not supported out of the box.

Docker Hosting

A number of companies want to get in on the business of hosting Docker in the cloud. The image below shows a collection.

These providers try to solve different problems, from simple hosting to becoming a "cloud operating system". I will only elaborate on two of them.

CoreOS

As the image shows, CoreOS is a collection of services to enable hosting of multiple containers in a CoreOS cluster.

- The CoreOS Linux distribution is a stripped-down Linux. It uses 114MB of RAM on initial boot. It does not provide a package manager, since it uses Docker or their own Rocket container to run everything.

- CoreOS uses Docker (or Rocket) to install an application on a host.

- It uses systemd as init service since it has great performance, handles start-up dependencies well, has great logging, and supports socket activation.

- etcd is a distributed, consistent key value store for shared configuration and service discovery.

- fleet is a cluster manager. It is an extension of systemd to work with multiple machines. It uses etcd to manage configuration and it is running on every CoreOS machine.

AWS

It is possible to host Docker containers on Amazon in two ways.

- Elastic Beanstalk can deploy Docker containers. This works fine but I find it to be very slow. A new deploy takes several minutes and it does not feel right when a container can be started in seconds.

- ECS, Elastic Container Service, is Amazon's upcoming container cluster solution. It is currently in preview 3 and it looks very promising. Just as with Amazon's other services, you interact with it through simple web service calls.

Summary

- Docker is here to stay.

- It fixes dependency hell.

- Containers are fast!

- Cluster solutions exist, but don't expect them to be seamless, yet!
